On the Global Investigative Journalism Network, Nael Shiab looks at the question of journalistic ethics in gathering data via web scraping. The article considers the moral aspects of whether and how to identify yourself as a journalist, whether to open-source the code you use for your bot, and so forth.

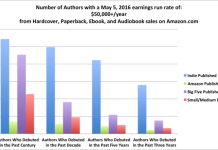

It’s an interesting article, all the more so because it put me in mind of Hugh Howey’s Author Earnings project, which attempts to lift the veil on sales data for self-published, independently published, and traditionally published books on Amazon and other sites. That project uses extensive web scraping to gather its data, and its lengthy reports effectively read as long-form independent journalism. I don’t know if Author Earnings makes its bot code available, but it does release all the raw data it gathers so others can do their own analyses.

It’s funny, the changes computers have brought to all aspects of life, not least journalism. The proliferation of web sites that make huge amounts of data public has given rise to a branch of investigation centered on gathering that information quickly and efficiently. The GIJN article does not even stop to ponder the fact that web scraping exists; it takes that for granted and focuses on the most ethical ways of doing it. Oh brave new world that has such creatures in it.

Imagine if authors were still paid by the word. How might computers inflate the word count? How purple would prose become? Might an author develop a framework that would launch a dozen or more novels?

@Frank: Maybe you’ve just discovered Patterson’s true secret. All those co-writers, maybe they’re just aliases for different computer programs! 🙂