As longtime readers will know, I’m a huge fan of handwriting recognition (HWR) on mobile devices. I still regard it as one of the best and most underappreciated forms of mobile input, for everything from searching through an ebook to writing whole novels on the move. Now new developments in AI software have opened the door to potentially radical improvements in HWR efficiency.

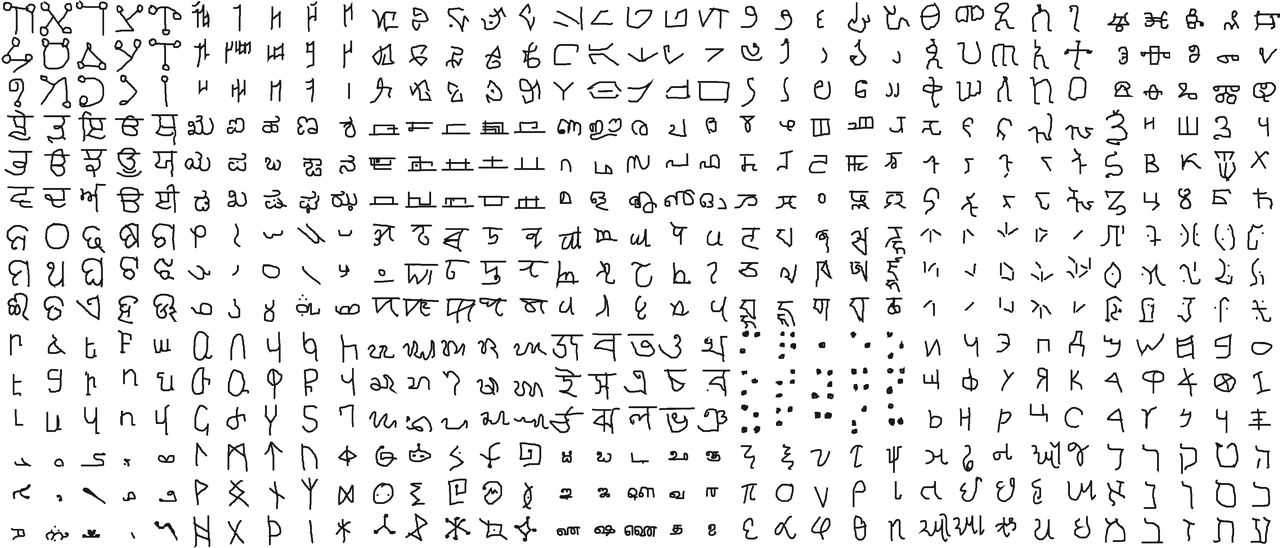

Just published in the journal Science, the paper describing the research, “Human-level concept learning through probabilistic program induction,” by Brenden M. Lake, Ruslan Salakhutdinov and Joshua B. Tenenbaum, outlines the use of new algorithms to mimic human learning, specifically the understanding of written characters. “We present a computational model that captures these human learning abilities for a large class of simple visual concepts: handwritten characters from the world’s alphabets,” the summary explains. As you can see from the illustration above, courtesy of Science magazine, the researchers covered a pretty wide range of scripts and character types. Even across that range, “on a challenging one-shot classification task, the model achieves human-level performance while outperforming recent deep learning approaches.”

As HWR software users know, most programs currently in use gather examples and user data to improve recognition efficiency. “Both images and pen strokes were collected,” the paper explains. “Handwritten characters are well suited for comparing human and machine learning on a relatively even footing: They are both cognitively natural and often used as a benchmark for comparing learning algorithms.” The paper claims a considerable improvement over existing systems, to say the least. “Our results show that this approach can perform one-shot learning in classification tasks at human-level accuracy.”

Of course, at this stage the research is a long way from being implemented in actual programs and apps. However, it has already been picked up by media focusing on its potential for machine learning in areas such as speech recognition, text-to-speech, OCR, or real-time translation. Obviously, many of these are applicable to ereading technology and to interfacing with mobile devices in general. Imagine Apple’s Siri or Microsoft’s Cortana juiced up with this kind of software.

As it happens, today’s HWR technology is already pretty good, often beating everyday lay users’ typing skills in accuracy. However, better is always … better, and I look forward to seeing what fruit these new developments bear.