Need an imagination? There’s an app for that. Or there soon will be.

Among the wackier wheezes worked up lately to improve on plain old Mark One printed text is a patent application filed by Bill Gates, Nathan Myhrvold, and a bunch of other Washington State dreamers to autoconvert text to video.

“A system for converting user-selected printed text to a synthesized image sequence, comprising: processing electronics configured to receive an image of text over a network, to translate the text of the image of text into a machine readable format, and, in response to receiving the image, to generate model information based on the text translated into the machine readable format.”

As detailed in the application, there’s somewhat more substance to the proposal than appears at first blush, especially in the context of education. “School textbooks are notorious for their dry presentation of material,” it runs. “Paintings or photographs are often included in the textbook to maintain the student’s interest and to provide context to the subject matter being conveyed. However, due to limited space, only a limited number of images may be included in the textbook. Further, students with dyslexia, attention deficit disorder, or other learning disabilities may have difficulty reading long passages of text. Thus, there is a need for improved systems and methods of conveying the subject matter underlying the text to a reader.”

I cut my teeth on digital editing as a humanities editor on the first CD-ROM generation of Microsoft Encarta in the UK back in the 1990s, so I know how dear education and visually assisted learning are to the sages of Redmond. The Bill & Melinda Gates Foundation, with its strong education focus, also bears witness to that. All the same, to my mind this looks like a solution in search of a problem. Enough teaching is already done with AV aids or live streaming. Does the world need this too?

Plus, despite the detailed outline of the methodology in the patent application, it’s hard to see how this would work. What image bank would the system draw on? How would it ensure consistency and reasonable flow? What style would the videos be in, and how closely could they follow the text? The patent application makes no reference to those little details.

Still, I wouldn’t want to carp when this may well be one in a string of related patents to fit into a larger whole. It could certainly work well enough when coupled with a pre-assembled image bank. Though once again, it’s hard to see where this scores over straight video or CGI illustration, but anyway …

Given the reams of autocorrect bloopers, meanwhile, it’s a hoot to imagine the possibilities that this could throw up. Especially if they take the adult content filter off. “Fifty Shades of Gates” doesn’t even bear thinking about.

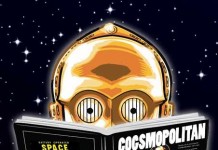

This is an example of how broken our patent system is. It is a patent on an idea. Patents are supposed to be for actual inventions, not ideas or methods of doing something. Even if one were to accept that a method could and should be patented, it would need to be formulated precisely and in enough detail to cover its particular implementation and methodology. If a person competent in the relevant field cannot follow the details of the patent step by step and produce an actual working model of the “invention”, how can it be anything other than an abstract idea? Can I patent theoretical hyperspace drives, cold fusion energy plants, and time travel? These kinds of patents are about as viable as the Underpants Gnomes’ scheme:

1) Input text

2) ???

3) Awesome interactive images, video, 3D models and holograms!